Twenty years ago on Dec. 31, the world at large waited with anticipation. When the clock struck midnight and the calendar changed from 1999 to 2000, would planes still fly? Would the banking system collapse? Would there be riots? Dogs and cats living together? Mass hysteria? Would our computers still work?

Spoiler alert: They did.

"A lot of money was spent. A lot of time was spent. A lot of effort went into solving the problem," says Dave Hatter, cybersecurity expert and adjunct professor at Cincinnati State. "Here we are 20 years later, thankfully, none of the dire predictions of doom actually occurred."

The problem was commonly called the Y2K bug, and it sprang from the early days of computing.

Hatter says the first computers were huge and expensive, and storing data was costly, too. "Programs were written to try to control how much memory was used," he says. "One of the ways they accomplished that was to store as little data as possible. In particular, with dates, if it's 1962, you've got a long time to worry about when you're going to hit 2000. You didn't need to store the 19, you'd just store the 62, for example."

The catch was that computers couldn't tell whether that 62 belonged to the 20th or the 21st century. "People started raising concerns that we only have 10 to 15 years to resolve this problem or critical systems in the financial industry - utilities, that sort of thing - if they can't figure out what the date is, they would potentially have all kinds of problems," Hatter says.
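To make that ambiguity concrete, here is a minimal sketch in Python of how date arithmetic can go wrong when only the last two digits of a year are stored. The function names and the 1962 example account are hypothetical, chosen for illustration; real legacy systems were written in older languages and varied widely in how they handled dates.

```python
def years_elapsed_two_digit(start_yy: int, end_yy: int) -> int:
    """Subtract years the way a space-saving program might: last two digits only."""
    return end_yy - start_yy


def years_elapsed_four_digit(start_year: int, end_year: int) -> int:
    """Subtract full four-digit years, which leaves no doubt about the century."""
    return end_year - start_year


# Hypothetical record dated 1962, stored as just "62".
print(years_elapsed_two_digit(62, 99))       # checked in 1999 (stored as 99)
print(years_elapsed_two_digit(62, 0))        # checked in 2000 (stored as 00)
print(years_elapsed_four_digit(1962, 2000))  # same check with full years
```

With two-digit years, the 1999 check gives 37 years, but the 2000 check gives -62, because the program effectively treats 00 as 1900. Storing the full year gives the expected 38.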

Computer systems that were built closer to 2000 didn't have the problem. Hatter says it was primarily a feature of the older, legacy systems. "A lot of companies had to go through their systems and try to determine if they were susceptible to this," he says.

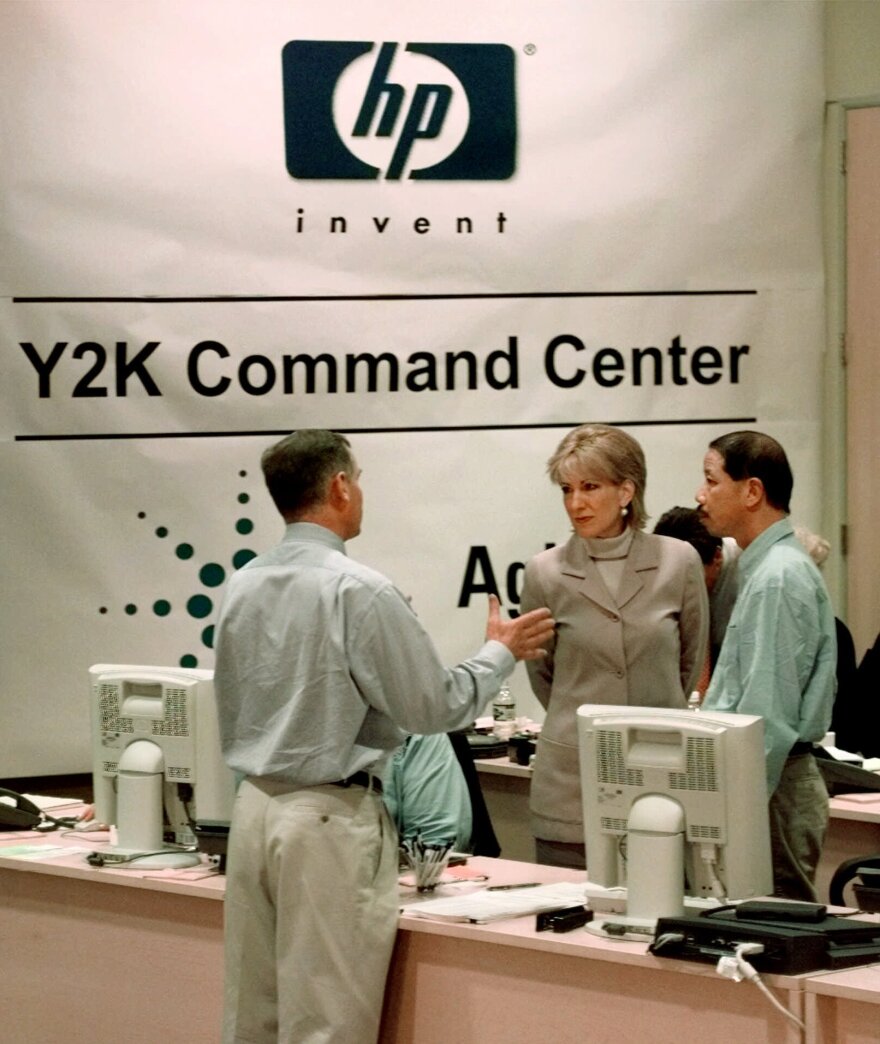

Spending To Save

A lot of money and a lot of hours were spent rewriting computer code.

"Anytime you start changing legacy code, particularly if you're not the person who originally wrote it, the likelihood of having a full comprehensive understanding of what it does is pretty slim. You make a change here, and it breaks something over there," Hatter says. "Had these systems not been updated you might have seen some relatively catastrophic things simply because if the system doesn't know what the date is, and it has functionality that's dependent upon the date, you may get unpredictable results."

Programming has changed a lot since computers started appearing in workplaces. Hatter says computing power and memory have become less expensive, so programmers no longer have to save space by lopping two digits off a date. But Y2K did have a lasting influence.

"In 1960, thinking about the year 2000, 40 years away, computers were still fairly new," Hatter says. "You didn't know whether this stuff would still be in use. Now, here we are all this time later, a lot of these legacy systems are still around. It's forced programmers to think more long term: What about the life span of this code? Will it still be around in 20 or 30 years? Am I taking short cuts now that could cause some kind of problem 20 or 30 years in the future?"

Hatter says the run-up to 2000 was an interesting time to be in programming. He says a lot of people made a lot of money, but they also put in a lot of work making sure everything continued to operate as needed. He says the hype around the Y2K bug was probably overblown, but admits, "if you don't perhaps add a little fear into the mix, people won't take it seriously until it's too late."